
There is no shortage of content about AI. There is an acute shortage of content that explains, at the level of mechanism, what the human’s job actually is when AI is doing the execution, and why that job is irreplaceable, not as a temporary limitation of current technology but as a permanent structural feature of how intelligence works.

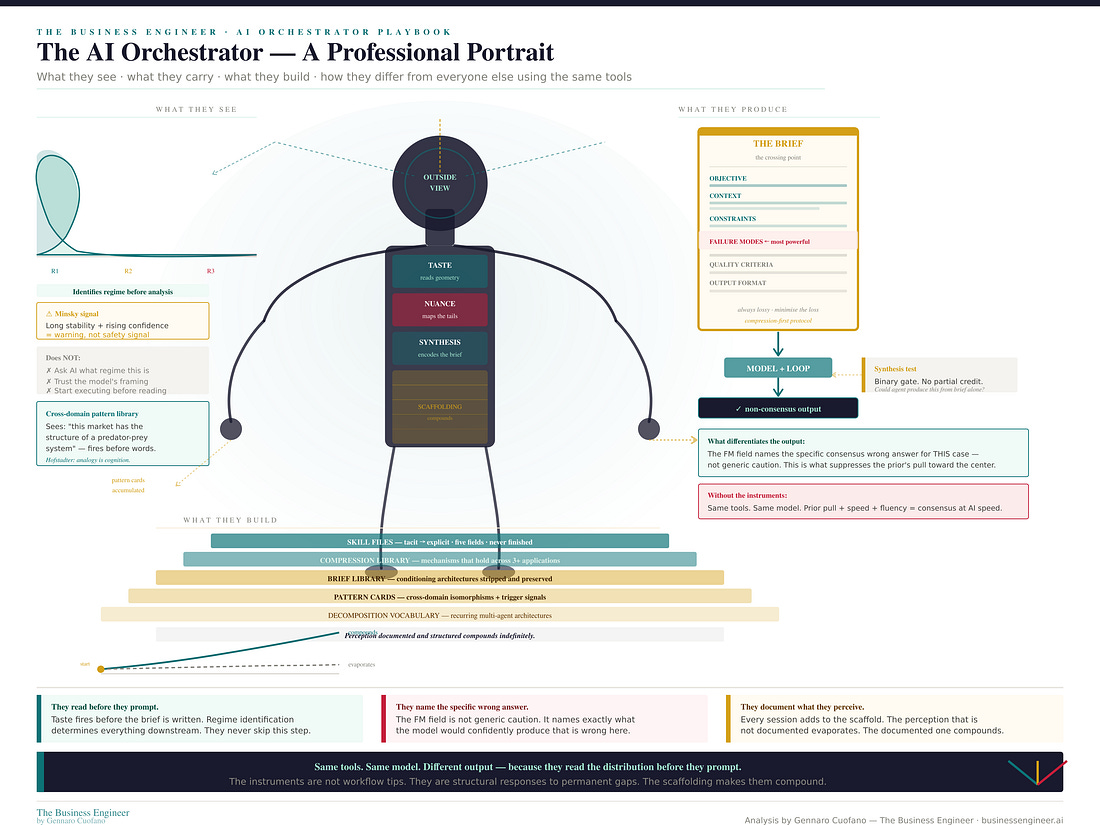

That job is orchestration. Not management, not supervision, not prompt engineering. Orchestration — in the precise sense that a conductor orchestrates an ensemble. The conductor does not play every instrument. They carry the interpretive judgment that determines what the ensemble produces. The musicians know their parts. The conductor knows how the whole should sound, which is knowledge of a completely different kind.

In the agentic economy, that is what the high-performing knowledge professional does. They shape what the agents produce, and the quality of that output is, structurally and permanently, a function of the quality of the orchestration.

Part I · The Technical Foundation

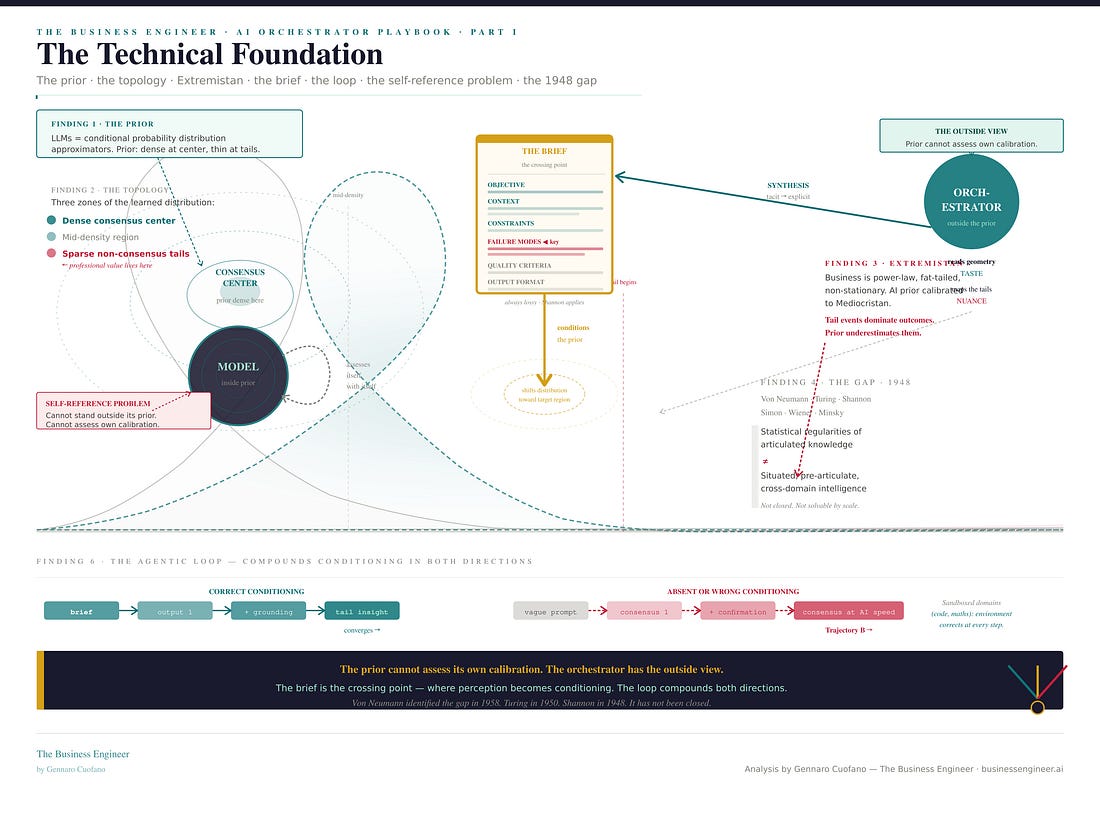

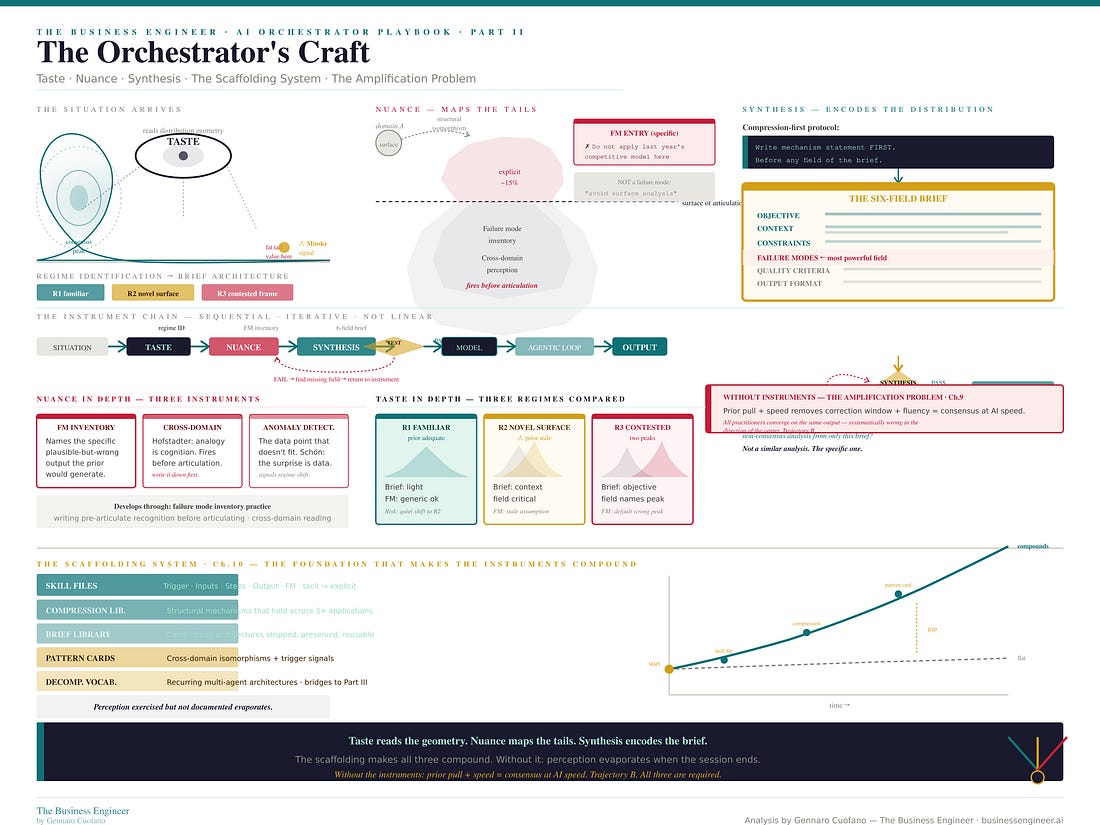

A language model is an approximator of conditional probability distributions trained on human-generated text. At inference time it samples from a learned distribution conditioned on the prompt. The prior — the model’s default distribution — is dense at the consensus center and thin at the non-consensus tails. This is not a flaw. It reflects the actual distribution of written human knowledge. The problem arises when the insight you need is in the tail, not the center.
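To make the "dense center, thin tail" picture concrete, here is a minimal sketch that stands in for a model's prior with a four-answer categorical distribution. The answer labels and probabilities are invented for illustration; a real model samples over token sequences, not named answers.

```python
import random
from collections import Counter

# Hypothetical toy prior over four candidate answers. The probabilities are
# made up for illustration and do not come from any real model.
PRIOR = {
    "consensus_a": 0.55,
    "consensus_b": 0.35,
    "tail_insight": 0.07,
    "rare_tail_insight": 0.03,
}

def sample_answers(dist, n, seed=0):
    """Draw n answers from a categorical distribution, reproducibly."""
    rng = random.Random(seed)
    answers = list(dist)
    weights = [dist[a] for a in answers]
    return Counter(rng.choices(answers, weights=weights, k=n))

counts = sample_answers(PRIOR, n=1000)
tail_share = (counts["tail_insight"] + counts["rare_tail_insight"]) / 1000

print(counts.most_common())
print(f"share of samples from the tail: {tail_share:.1%}")
```

Under these assumed numbers, roughly nine samples in ten land in the consensus region. Nothing is broken; the tail is simply where the probability mass is thin.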

The prompt is not an instruction to a deterministic system. It is a conditioning signal that shifts the probability distribution — narrowing it, focusing it, suppressing certain regions, amplifying others. The failure modes field of a brief, which most practitioners leave empty, is the most powerful conditioning element: it explicitly marks the high-density wrong region and directs the model away from it.
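One way to picture conditioning is as a reweighting of the prior followed by renormalization. The sketch below is an analogy, not a description of what happens inside a transformer: the two-answer prior, the `condition` helper, and the 0.05 suppression factor are all assumptions chosen to show how marking the wrong region moves mass toward the tail.

```python
def condition(prior, weights):
    """Reweight a distribution by per-answer factors, then renormalize.

    Answers missing from `weights` keep a factor of 1.0 (unchanged).
    """
    unnormalized = {k: p * weights.get(k, 1.0) for k, p in prior.items()}
    total = sum(unnormalized.values())
    return {k: v / total for k, v in unnormalized.items()}

# Hypothetical prior: the consensus answer dominates.
prior = {"consensus": 0.90, "tail_insight": 0.10}

# A failure-modes entry modeled as a suppression factor on the region the
# brief explicitly marks as wrong. The value 0.05 is an assumption.
failure_modes = {"consensus": 0.05}

posterior = condition(prior, failure_modes)
print(posterior)
```

Nothing was added to the tail directly. Suppressing the high-density wrong region is what lets the tail answer become the most likely one.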

Business domains are Extremistan, Taleb’s term for domains governed by power-law, fat-tailed distributions, where a single observation can dominate the dataset and the average is not predictive. AI priors are calibrated to Mediocristan: domains where the average is predictive and outliers are bounded. In Extremistan, a model optimized for average performance systematically underestimates the tail events that actually dominate outcomes.
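The difference between the two regimes shows up in a single statistic: how much of the total the largest observation accounts for. The sketch below uses a Gaussian as a stand-in for Mediocristan and a Pareto distribution as a stand-in for Extremistan. The parameters (mean 100, standard deviation 15, Pareto shape 1.1) are assumptions picked for illustration, not estimates from any business dataset.

```python
import random

def max_share(values):
    """Fraction of the total contributed by the single largest observation."""
    return max(values) / sum(values)

rng = random.Random(42)  # fixed seed so the run is reproducible
n = 10_000

# Mediocristan stand-in: thin-tailed Gaussian with made-up parameters.
gaussian = [rng.gauss(100, 15) for _ in range(n)]

# Extremistan stand-in: Pareto with shape 1.1, an assumed heavy tail.
pareto = [rng.paretovariate(1.1) for _ in range(n)]

gauss_share = max_share(gaussian)
pareto_share = max_share(pareto)

print(f"Gaussian: largest observation is {gauss_share:.4%} of the total")
print(f"Pareto:   largest observation is {pareto_share:.4%} of the total")
```

In the Gaussian sample the largest value is a rounding error against the total, so the average tells you most of what you need. In the Pareto sample one draw can carry a meaningful slice of the whole, which is why a summary built around the average misleads.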

The agentic loop compounds initial conditioning in both directions:

- Correct conditioning converges toward the right tail with each iteration.
- Absent or wrong conditioning diverges toward the consensus center, building more elaborate, more internally consistent, more confidently wrong analysis with every loop.

In sandboxed domains like code and mathematics, the environment provides a ground-truth correction signal at every step. Outside sandboxed domains, the correction signal is the orchestrator’s situated judgment.
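The compounding behavior of the loop can be sketched as a toy one-dimensional simulation. Everything here is an assumption made for illustration: the estimate is a single number, the consensus center sits at 0, the right answer sits at 10, each iteration regresses part of the way toward the center, and an optional `correction` callback plays the role of the ground-truth or orchestrator signal. Real agent loops are not scalar updates.

```python
def run_loop(start, steps, correction=None, prior_pull=0.3, center=0.0):
    """Iterate a toy agent loop and return the final estimate.

    Each step pulls the estimate toward the prior's center. If a correction
    signal is supplied, it is applied after the pull on every step.
    """
    x = start
    for _ in range(steps):
        x += prior_pull * (center - x)   # regression toward the consensus center
        if correction is not None:
            x += correction(x)           # tests, proofs, or orchestrator judgment
    return x

TARGET = 10.0  # hypothetical location of the right-tail answer

def toward_target(x):
    """Assumed correction: close 60% of the remaining gap to the target."""
    return 0.6 * (TARGET - x)

no_signal = run_loop(start=5.0, steps=10)
with_signal = run_loop(start=5.0, steps=10, correction=toward_target)

print(f"without correction: {no_signal:.3f}")
print(f"with correction:    {with_signal:.3f}")
```

Both runs start from the same point. Without a correction signal the estimate decays toward the center on every pass. With one, it settles near the target, though in this toy the prior's pull keeps it slightly short of it.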

Von Neumann, Turing, Shannon, Simon, Wiener, Rosenblatt, Minsky, and McCarthy all identified, from different starting points, the same structural gap: between the statistical regularities of articulated human knowledge and the situated, pre-articulate, cross-domain intelligence that navigates the real world. That gap was named in 1948. It has not been closed.

Polanyi’s formulation is still the sharpest: “We can know more than we can tell.” The training corpus is the articulated surface of human knowledge. The distribution shape of any real domain lives mostly below it, in situated, tacit, pre-linguistic knowing that was never written down. The model learns from the surface. The orchestrator operates from below it.

Part II · The Orchestrator’s Craft

The three instruments are structural responses to three permanent gaps.

Taste — reading the geometry

Taste addresses the gap between the prior’s Mediocristan calibration and Extremistan domains. It is the capacity to read distribution geometry before a